Key Facts (Executive Summary)

[!IMPORTANT]

- Deadline: 2 August 2026 - from this date, most AI Act provisions apply to every law firm in Poland that uses AI.

- Educational obligation: Since 2 February 2026, the AI Literacy requirement has been in force, mandating internal procedures and AI training.

- Risk classification: Most legal tools used for research, such as LexAlpha, are limited-risk systems. The exceptions are tools used for HR profiling or judicial decision support.

- Fines and GDPR: Penalties reach EUR 35 million (or 7% of turnover) for the use of prohibited AI practices - cumulation with GDPR fines is possible. The absence of a Polish supervisory authority does not exempt anyone from obligations under EU law.

Introduction: What is the AI Act and why does it concern your law firm?

2 August 2026 is a date no law firm in Poland can ignore. From that day, most provisions of the EU Regulation on Artificial Intelligence - commonly known as the AI Act - become fully applicable. This applies not only to technology companies creating AI systems but to every entity that uses such systems professionally - including law firms, barrister chambers and notarial offices.

Meanwhile, Poland has still not adopted a national act implementing the AI Act, and legislative work is delayed by over 100 days from the original schedule. In practice, this means law firms must comply with the EU regulation directly, without national guidance. Violations can have consequences even before a supervisory authority is established, because lawyers remain subject to existing accountability frameworks (disciplinary liability, civil liability for damages).

This article is a practical guide for law firm partners and managing associates. You will learn:

- how the AI Act works and who it really applies to,

- whether AI tools used in your firm fall under high-risk provisions,

- what specific obligations you must fulfil before 2 August 2026,

- how to verify whether your AI provider is compliant,

- and what to do if you have not yet started preparations.

What is the AI Act and who exactly does it apply to in the legal sector?

The AI Act is Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 - the world’s first comprehensive law regulating AI systems. As an EU regulation, it applies directly throughout the EU without the need for national implementing legislation.

The regulation is based on a risk-based approach: the higher the potential risk of harm from an AI system, the stricter the legal requirements. The AI Act distinguishes four categories of systems:

| Category | Examples | Requirements |
|---|---|---|
| Unacceptable risk | Social scoring systems, subliminal manipulation | Prohibited |
| High risk | Systems in justice, HR, healthcare | Rigorous requirements, documentation, oversight |
| Limited risk | Chatbots, deepfakes, recommendation systems | Transparency obligations |
| Minimal risk | Spam filters, calculators, video games | No specific requirements |

The key question for law firms is: in which category are the AI tools you use?

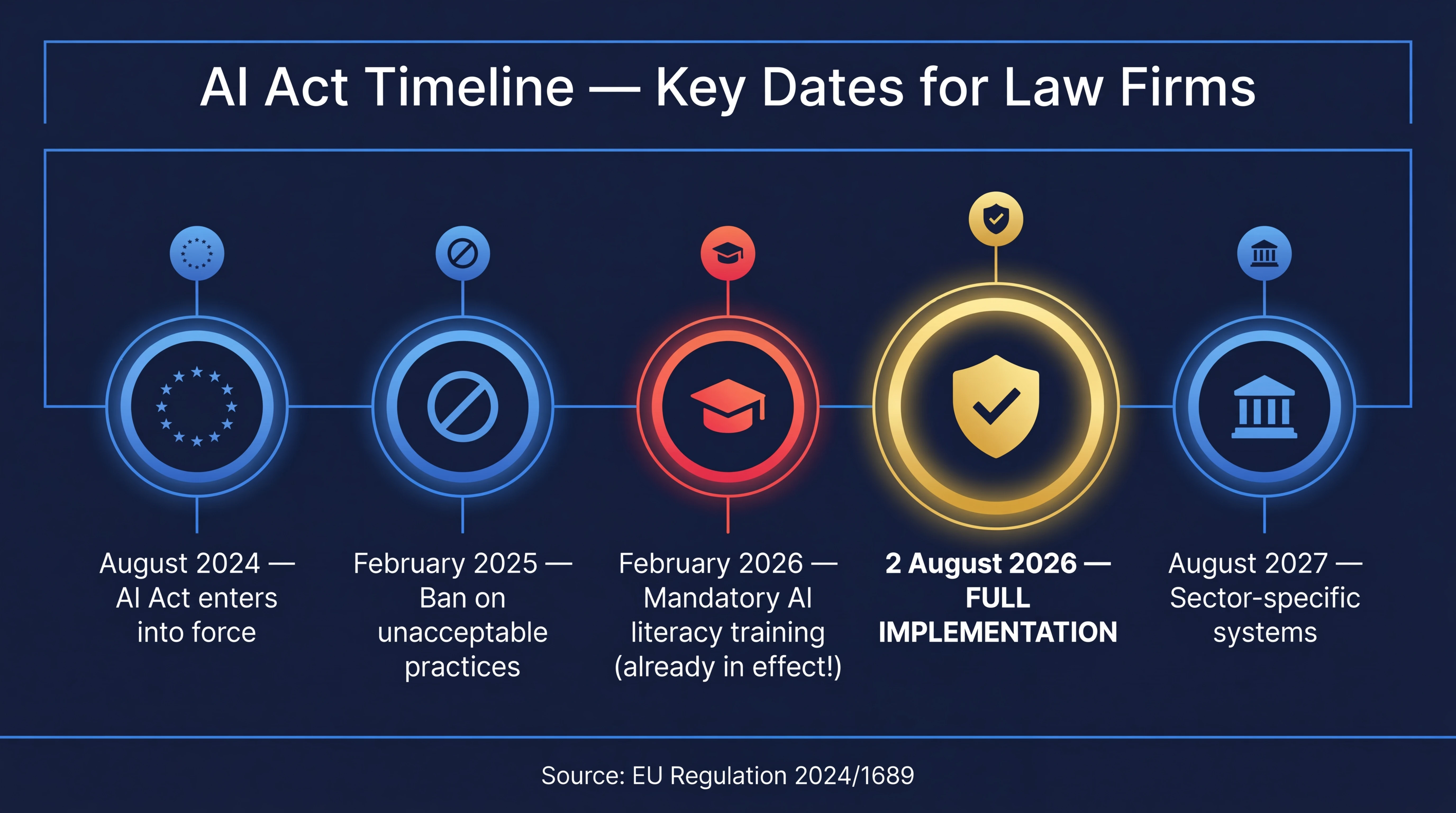

AI Act implementation timeline: where are we?

| Date | What entered into force |
|---|---|
| 1 August 2024 | AI Act enters into force as EU regulation |
| 2 February 2025 | General provisions, prohibition of unacceptable practices |
| 2 February 2026 | AI literacy obligation - employee AI training |
| 2 August 2026 | Start of application - high-risk systems, deployer obligations |
| 2 August 2027 | High-risk systems integrated with sectoral products |

Since 2 February 2026, Art. 4 of the AI Act has been in force, requiring employers to ensure an adequate level of AI competence among employees who work with AI systems. This obligation already applies to your firm. If, for example, an employee breaches transparency rules or engages in a prohibited practice through lack of knowledge, the absence of prior training may count as an aggravating circumstance against the firm.

Is a law firm a “deployer” under the AI Act?

A deployer (Art. 3(4) of Regulation 2024/1689) is any natural or legal person using an AI system in the course of their professional activity. This encompasses law firms using AI tools for research, drafting or document analysis. Deployer obligations are regulated by Art. 26 of the AI Act.

What risk categories apply to law firms?

Analytical tools and legal document generators (e.g. LexAlpha)

Tools that assist lawyers - AI assistants that analyse contracts or generate court filings based on legislation, case law and the firm’s own practice - are typically classified as minimal- or limited-risk systems, provided they do not directly affect the fundamental rights of natural persons or influence judicial decision-making (i.e. they do not support a court in interpreting the law or applying it to a specific set of facts).

In the review-first model used by LexAlpha, the lawyer remains the author and verifier - AI is an assistant, not a decision-maker. This design is consistent with the AI Act’s human oversight requirement.

Systems supporting the administration of justice

The AI Act explicitly lists AI systems used in the administration of justice as potentially high-risk - particularly those designed for fact analysis, evidence credibility assessment, or supporting judicial decisions.

HR systems used in law firms

AI tools for recruitment, employee evaluation or employee performance monitoring are explicitly listed in Annex III as high-risk.
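
The classification logic described in this section can be sketched in a few lines of Python. This is only an illustrative aid for a firm's preliminary triage, not a legal test: the use-case tags (`recruitment`, `contract_analysis` and so on) and the function name `preliminary_category` are hypothetical, and assigning research and drafting tools to the limited-risk tier is an assumption based on the description above.

```python
from __future__ import annotations

from enum import Enum


class RiskCategory(Enum):
    UNACCEPTABLE = "unacceptable risk"
    HIGH = "high risk"
    LIMITED = "limited risk"
    MINIMAL = "minimal risk"


# Hypothetical use-case tags for an internal inventory. The tag names are
# illustrative and are not terms defined by the regulation.
PROHIBITED_USES = {"social_scoring", "subliminal_manipulation"}
HIGH_RISK_USES = {
    "recruitment",
    "employee_evaluation",
    "employee_monitoring",
    "judicial_decision_support",
    "evidence_credibility_assessment",
}
# Assumption: research and drafting assistants sit in the limited-risk tier.
LIMITED_RISK_USES = {"chatbot", "legal_research", "contract_analysis", "document_generation"}


def preliminary_category(uses: set[str]) -> RiskCategory:
    """Return the strictest category triggered by any declared use."""
    if uses & PROHIBITED_USES:
        return RiskCategory.UNACCEPTABLE
    if uses & HIGH_RISK_USES:
        return RiskCategory.HIGH
    if uses & LIMITED_RISK_USES:
        return RiskCategory.LIMITED
    return RiskCategory.MINIMAL


if __name__ == "__main__":
    # A research tool that is also used to screen job applicants is triaged as high risk.
    print(preliminary_category({"legal_research", "recruitment"}).value)
```

The strictest applicable category wins, which mirrors the point made above: the same tool can move into the high-risk tier as soon as it is used for recruitment or employee evaluation.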

What specific obligations does your law firm have?

Obligations for every law firm using AI

1. AI literacy (already in force since 2 February 2026)

You must ensure that persons working with AI systems have an adequate level of knowledge. In practice:

- conduct AI training or workshops for the team,

- develop internal procedures for using AI tools,

- document that such measures have been taken.

2. AI provider verification

If you use an external AI tool, you are responsible for verifying whether your provider meets AI Act requirements.

3. Disclosure of AI use

For systems generating content (such as court filings), lawyers should be clear about when and how to inform clients about the role of AI in document preparation.

What fines apply for AI Act violations - up to EUR 35 million?

| Category of violation | Fine amount | AI Act Article |
|---|---|---|
| Use of prohibited AI systems | Up to EUR 35M or 7% of annual turnover | Art. 99(3) |
| Breach of deployer obligations (Art. 26) | Up to EUR 15M or 3% of turnover | Art. 99(4) |
| Providing false information to authorities | Up to EUR 7.5M or 1% of turnover | Art. 99(5) |

Fines can be cumulated with GDPR sanctions, potentially leading to combined exposure exceeding EUR 50 million.
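
To see how the two limits in each row interact, here is a minimal Python sketch of the ceiling calculation. The tier keys and the function name `fine_ceiling` are hypothetical. The sketch assumes that the higher of the two limits applies to large undertakings and the lower one to SMEs (Art. 99(6)), and it computes only the statutory maximum, not the fine an authority would actually impose.

```python
from decimal import Decimal

# (fixed cap in EUR, share of annual turnover) for each tier in the table above.
FINE_TIERS = {
    "prohibited_practice": (Decimal("35000000"), Decimal("0.07")),
    "deployer_obligation": (Decimal("15000000"), Decimal("0.03")),
    "false_information": (Decimal("7500000"), Decimal("0.01")),
}


def fine_ceiling(tier: str, annual_turnover_eur: Decimal, is_sme: bool = False) -> Decimal:
    """Return the maximum possible fine for a tier, given annual turnover."""
    fixed_cap, share = FINE_TIERS[tier]
    turnover_cap = annual_turnover_eur * share
    # Assumption: SMEs face the lower of the two limits, other undertakings the higher.
    if is_sme:
        return min(fixed_cap, turnover_cap)
    return max(fixed_cap, turnover_cap)


if __name__ == "__main__":
    # A small firm with EUR 2 million turnover breaching a deployer obligation.
    print(fine_ceiling("deployer_obligation", Decimal("2000000"), is_sme=True))
```

For a small firm the turnover-based limit is usually the binding one, so the headline figures in the table are ceilings rather than typical amounts.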

Compliance checklist for law firms - what to do before 2 August 2026?

Do immediately

- AI tools inventory - list all AI systems used in the firm

- Preliminary risk classification for each tool

- AI literacy training - plan and conduct training

Within 60 days

- Provider verification - data storage location, training data policy, GDPR-compliant DPA

- Internal AI policy - who can use AI, for what purposes, how to verify results

- Oversight procedure - designate responsible person

Before 2 August 2026

- Full compliance assessment for high-risk systems

- Fundamental rights impact assessment (FRIA) for systems affecting fundamental rights

- Update contracts with AI providers
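
The inventory and verification steps in the checklist lend themselves to a simple structured record. The sketch below is one possible shape, assuming a firm tracks its tools in Python. The class `AIToolRecord`, its field names and the helper `days_remaining` are all hypothetical. A spreadsheet with the same columns would serve equally well.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date
from typing import Optional

DEADLINE = date(2026, 8, 2)  # application date used throughout the checklist


@dataclass
class AIToolRecord:
    """One row of the firm's AI tools inventory (illustrative fields only)."""

    name: str
    purpose: str
    risk_category: str  # preliminary classification, e.g. "limited" or "high"
    provider_checks: dict[str, bool] = field(default_factory=dict)
    responsible_person: Optional[str] = None

    def open_items(self) -> list[str]:
        """List provider checks not yet passed, plus a missing oversight owner."""
        items = [check for check, done in self.provider_checks.items() if not done]
        if self.responsible_person is None:
            items.append("designate responsible person")
        return items


def days_remaining(today: date) -> int:
    """Days left until the deadline, counted from the given date."""
    return (DEADLINE - today).days


if __name__ == "__main__":
    tool = AIToolRecord(
        name="research assistant",
        purpose="legal research",
        risk_category="limited",
        provider_checks={"EU data storage": True, "GDPR-compliant DPA": False},
    )
    print(tool.open_items())
    print(days_remaining(date(2026, 3, 6)))
```

Keeping the record per tool rather than per provider makes it easier to spot a single tool that is used for both a limited-risk and a high-risk purpose.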

How LexAlpha helps your firm comply with the AI Act

Data does not train the AI model

Documents submitted to LexAlpha are processed only during response generation and are never saved or used for model training.

EU hosting with signed DPA

LexAlpha’s infrastructure is based on Microsoft Azure in the EU region, with a GDPR-compliant Data Processing Agreement. Data is never transferred outside the EEA.

Military-grade encryption

All data is encrypted with AES-256, both at rest and in transit.

Review-first model = built-in human oversight

LexAlpha’s architecture assumes that AI generates proposals, while the final decision on every element belongs to the lawyer. This is consistent with the AI Act’s human oversight requirement.

Frequently Asked Questions (FAQ)

Does the AI Act apply to a sole practitioner law firm?

Yes. The AI Act applies regardless of firm size. Regulation 2024/1689 applies the principle of proportionality (Art. 25(2)) - requirements for micro-enterprises may be implemented in a simplified manner, particularly when they use ready-made low-risk solutions.

Must a law firm inform clients that documents were created with AI assistance?

In most cases, yes. You should inform the client when AI generates the substantive content of a filing or contract, even though the lawyer still carries out a full substantive review (human oversight). The AI Act does not impose a blanket disclosure obligation for every case, but the ethical rules of the relevant professional body (bar association, legal advisors’ chamber) often address this, and responsibility for document quality remains with the lawyer regardless of the tools used.

What fines apply for non-compliance with the AI Act?

The most serious violations (use of prohibited systems) carry fines of up to EUR 35 million or 7% of global annual turnover. Violations of high-risk system requirements carry fines of up to EUR 15 million or 3% of turnover. These fines can be combined with GDPR sanctions.

How do I verify whether my AI provider meets AI Act requirements?

Ask your provider about: technical system documentation, data storage and processing policy, whether user data is used for model training, GDPR-compliant DPA, server location (EU vs outside the EEA). Professional LegalTech platforms should provide this information readily.

5 AI Act takeaways for law firm partners

- Time is working against you - less than 5 months remain, and preparations for full compliance take time.

- The absence of a Polish implementing act does not mean the absence of obligations - the AI Act applies directly as an EU regulation.

- Most legal tools are minimal or limited risk - but they still require AI literacy and provider verification.

- Choosing the right AI provider has legal significance - the firm is responsible for the systems it uses and whether they are compliant with the AI Act.

- The review-first model is not just good practice, it is a requirement - human oversight over AI decisions is part of the AI Act.

Want to see how LexAlpha works in practice? Schedule a free presentation →

This article is for informational purposes only and does not constitute legal advice. Legal status as of March 6, 2026.
